Day 45: Moment Generating Functions

There wasn’t a good visual for what we are talking about today, so how about a joke instead?

Well, from the views it looks like my function of a random variable example was a big hit. Hopefully we’re clearing up a lot of the confusion now that we are looking at some examples of how to solve these, and we can get a better grasp of how this works. Today we’re going to introduce a new concept. This one is going to be a bit more complex than our last, so you may want to review our last post before we start.*

If you are all caught up from last post, we looked at how to take a random variable X, transform it with some function g to get a new random variable Y = g(X), and then find the distribution of Y. Now, that was just one way to solve this type of problem, and there are typically three ways to solve these. The way we solved it last post is called the method of distribution functions: we worked out the CDF of Y piece by piece (three pieces in that example, though in some cases you can do it with two), and from that we determined our pdf by taking the derivative of our CDF.

Today we are going to define the tool behind another way to solve these types of problems, called moment generating functions. Next up we can look at an example using moment generating functions to solve a function of a single random variable, but since this is a somewhat roundabout concept, I thought it best to break it up into two posts: one introducing the concept and one going over the example of how to use it. Okay, enough talk, let’s define some things.

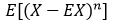

First up, what the heck is a moment? When it comes to random variables, the nth moment of X is defined to be E[X^n].

Now at first glance this may be confusing because we are introducing another new concept, and that is this E[ ] operator. Well, that is called the expected value, and E[X] would be read as the expected value of X. We haven’t gone over expected values and there is a good reason. We already covered expected values! No really, an expected value is the mean of the random variable. Okay, so what about the inside bit, the X to the n part? Well that’s a little different, but that’s because the value of n dictates what we are talking about. If n = 1 then we are talking about the mean, but say we want n = 4, well that is tied to kurtosis (a fancy way of describing the shape of the pdf and a measure of how much probability is in the tails of the distribution; strictly speaking, kurtosis is built from the fourth central moment, scaled by the variance squared. Don’t worry if this isn’t making sense, just know it exists).

We also need to define another concept, the nth central moment. That is defined as E[(X - E[X])^n].

In this case we are just subtracting the mean of X from X before raising it to the nth power. If n = 2 in this equation, then you are describing the variance of X. So what we have is two mathematical ways to write mean and variance using a compact formula, that’s it. So to sum it up, moments are just different mathematical quantities we can use to describe our random variable, and we have this very formal way of writing it: instead of saying mean(X) we say E[X], because as I always say, we are lazy, so why write out mean when we can say the same thing with one letter?
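To make the two definitions concrete, here is a small sketch that computes moments and central moments exactly for a fair six-sided die. The die and the helper names `raw_moment` and `central_moment` are my own illustrative choices, not anything from the earlier posts.

```python
from fractions import Fraction

# Fair six-sided die: each face 1..6 has probability 1/6 (an illustrative choice).
pmf = {k: Fraction(1, 6) for k in range(1, 7)}

def raw_moment(pmf, n):
    """nth moment E[X^n] for a discrete pmf given as {value: probability}."""
    return sum(p * x**n for x, p in pmf.items())

def central_moment(pmf, n):
    """nth central moment E[(X - E[X])^n] for the same kind of pmf."""
    mean = raw_moment(pmf, 1)
    return sum(p * (x - mean)**n for x, p in pmf.items())

mean = raw_moment(pmf, 1)            # first moment: the mean
second_moment = raw_moment(pmf, 2)   # second moment: E[X^2]
variance = central_moment(pmf, 2)    # second central moment: the variance

print(mean, second_moment, variance)
```

Note that the variance here also equals `second_moment - mean**2`, which is the link between the plain moments and the central ones.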

Okay, so now that we have that bit out of the way, you may be asking, what is a moment generating function? Well, let’s define that concept and then next post we can use it in an example. We say that the moment generating function (MGF) of a random variable X is a function written as M(t) (note I dropped the subscript X to write it here in the text), and it is defined more precisely as M_X(t) = E[e^(tX)], like this

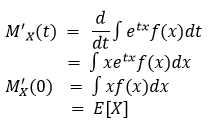

which is defined for values t in a region about zero, where -a < t < a for some value a > 0. Note that a just marks out the interval of t values around zero on which the expected value is finite. So why is this useful? Well, if we plug in t = 0 we find that M(0) = E[1] = 1 (since e to the power of zero is 1). Next we can differentiate M(t) with respect to t (if we assume X is continuous in this case) and we find

(where we assume that we can take the derivative inside the integral)
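Written out, and assuming X is continuous with a pdf that I will call f_X(x) (my notation, the original figure may have used a different symbol), the first derivative works out roughly like this:

$$
M_X'(t) = \frac{d}{dt}\int_{-\infty}^{\infty} e^{tx} f_X(x)\,dx
        = \int_{-\infty}^{\infty} x\,e^{tx} f_X(x)\,dx,
\qquad
M_X'(0) = \int_{-\infty}^{\infty} x\,f_X(x)\,dx = E[X].
$$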

Notice that the first derivative, evaluated at t = 0, is just our mean. This is good since that is what we were expecting to pop out. Also, notice that the e value goes to 1 because e to the zero is 1 (there is a t in the exponential and we set it to zero). Now if we take the second derivative and set t = 0, we get the second moment, E[X^2]. That isn’t quite the variance, but the variance pops out as soon as we subtract the square of the mean (which is why we defined these two concepts at the beginning). Let’s take a look at that.
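Here is a sketch of the second derivative under the same assumptions (X continuous with pdf f_X(x), my notation, and the derivative allowed inside the integral). Setting t = 0 gives the second moment, and the variance follows from combining it with the first derivative:

$$
M_X''(t) = \int_{-\infty}^{\infty} x^2 e^{tx} f_X(x)\,dx,
\qquad
M_X''(0) = \int_{-\infty}^{\infty} x^2 f_X(x)\,dx = E[X^2],
$$

$$
\operatorname{Var}(X) = E[X^2] - \left(E[X]\right)^2 = M_X''(0) - \left(M_X'(0)\right)^2.
$$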

So a big secret about me, or maybe not a big secret since I share this fairly frequently: I’m bad at math. Not in the "I can’t do it" sense (obviously, as a PhD student in an engineering program with a BS and MS in mechanical engineering, I can do it), I just have trouble grasping concepts as quickly as my peers. That said, you have to admit this is a pretty cool trick, and one that isn’t too difficult to follow if you have an understanding of calculus (even just a basic one for these derivatives and integrals).
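If you want to see the trick in action without doing the calculus by hand, here is a rough numerical check. It assumes an exponential random variable with rate 2 (my choice for illustration, not something from the earlier posts), whose MGF is known to be rate / (rate - t) for t < rate, and it approximates the derivatives at zero with simple finite differences. The function names and the step size `h` are hypothetical.

```python
RATE = 2.0  # hypothetical rate parameter of an exponential distribution

def mgf(t, rate=RATE):
    """MGF of an exponential random variable: rate / (rate - t), finite only for t < rate."""
    if t >= rate:
        raise ValueError("MGF is not finite for t >= rate")
    return rate / (rate - t)

def first_derivative_at_zero(f, h=1e-3):
    """Central-difference estimate of f'(0)."""
    return (f(h) - f(-h)) / (2 * h)

def second_derivative_at_zero(f, h=1e-3):
    """Central-difference estimate of f''(0)."""
    return (f(h) - 2 * f(0.0) + f(-h)) / h**2

mean = first_derivative_at_zero(mgf)            # approximates E[X] = 1 / rate
second_moment = second_derivative_at_zero(mgf)  # approximates E[X^2] = 2 / rate**2
variance = second_moment - mean**2              # approximates 1 / rate**2

print(mgf(0.0), mean, second_moment, variance)
```

The `t < rate` check is the "region about zero" from the definition: for this distribution the MGF only exists on an interval around t = 0, which is all we need since every derivative is evaluated at zero.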

Okay, so now we’ve very thoroughly covered what a moment generating function is and why it works. Next we will apply this knowledge and look at how we can solve a function of a single random variable, which is why the last post is called part 1. Hopefully this made sense. If not, it should be better when we go over our example.

Until next time, don’t stop learning!

*My dear readers, please remember that I make no claim to the accuracy of this information; some of it might be wrong. I’m learning, which is why I’m writing these posts and if you’re reading this then I am assuming you are trying to learn too. My plea to you is this, if you see something that is not correct, or if you want to expand on something, do it. Let’s learn together!!

This entry was posted on October 3, 2019 by The Lunatic. It was filed under 365 Days of Academia - Year one and was tagged with cumulative distribution function, Education, expected value, Function of random variables, Math, mathematics, Moment Generating Functions, moments, probability, probability density function, random variables, statistics.
