Day 55: One Function of Two Random Variables Example 2

Don’t worry, the image ties into what we are doing today, I promise!

Well, in our last post we took a break and talked zombies! While I would love to do a whole month of Halloween topics, this year is not the time; maybe next year. In any case, today we are going to go over another example of a single function of two random variables. This one is slightly more complex than our first example, but it won't be extremely complex (we're working toward that). So let's take a look, shall we?*

For those of you just joining us, we’re talking random variables, which started way back here. We then introduced functions of one random variable. Next, we went on to introduce two random variables. That brings us up to speed, so let’s get started with our example!

For this example, let's say you're a restaurant owner and you know that the number of customers visiting in a given day can be modeled by a random variable N ~ Poisson(λ). Each customer buys a drink with probability p, independently of every other customer and independently of N.

To model this, let X be the number of customers who buy a drink and Y the number who don't, so that X + Y = N. We are interested in a few things: the marginal PMFs (probability mass functions, since X and Y are discrete) of X and Y, and the joint PMF of X and Y. We also want to determine

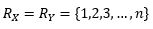

First, let's look at the ranges of our random variables.

Easy enough: X and Y count the same kind of thing (customers), so they share the same range. Given N = n, X can be anything from 0 (nobody buys a drink) to n (everybody does), and the same goes for Y. Since N itself can be any nonnegative integer, the overall range of both X and Y is {0, 1, 2, …}. Note that just because the ranges are equal doesn't mean the values are: not everyone will buy a soda, and it most likely won't be a 50:50 split between people who buy a soda and people who don't.

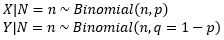

Now let's condition on N = n. Given N = n, X is the sum of n independent Bernoulli(p) random variables, where p is the probability that a customer buys a soda, so X given N = n is Binomial(n, p). The same reasoning works for Y, except that we need the probability that a customer does not buy a soda, q = 1 − p, so Y given N = n is Binomial(n, q). From this we now have
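The generative story above is easy to simulate, which is a nice sanity check on the conditioning step. Below is a minimal sketch using only the Python standard library. The helper names `sample_poisson` and `simulate_day` are our own, the Poisson sampler is Knuth's multiplication method (our choice, reasonable for small λ), and λ = 10, p = 0.3 are made-up values. "Conditioning on N = n" is done by keeping only the simulated days that had exactly n customers.

```python
import math
import random


def sample_poisson(lam: float, rng: random.Random) -> int:
    """Draw one Poisson(lam) value using Knuth's multiplication method (fine for small lam)."""
    threshold = math.exp(-lam)
    count = 0
    product = rng.random()
    # Keep multiplying uniforms until the running product drops to e^{-lam} or below.
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def simulate_day(lam: float, p: float, rng: random.Random) -> tuple[int, int, int]:
    """Simulate one day and return (N, X, Y)."""
    n = sample_poisson(lam, rng)
    # X is a sum of n independent Bernoulli(p) trials, one per customer.
    x = sum(1 for _ in range(n) if rng.random() < p)
    y = n - x  # everyone else did not buy a drink (probability q = 1 - p each)
    return n, x, y


if __name__ == "__main__":
    rng = random.Random(55)  # fixed seed so reruns give the same numbers
    lam, p, n_fixed = 10.0, 0.3, 10
    days = [simulate_day(lam, p, rng) for _ in range(50_000)]
    # Condition on N = n_fixed: keep only the days with exactly n_fixed customers.
    xs = [x for n, x, _ in days if n == n_fixed]
    for k in range(6):
        empirical = xs.count(k) / len(xs)
        binomial = math.comb(n_fixed, k) * p**k * (1 - p) ** (n_fixed - k)
        print(f"k={k}: simulated {empirical:.3f}, Binomial({n_fixed}, {p}) {binomial:.3f}")
```

The simulated frequencies should sit close to the Binomial(n, p) probabilities, with the usual sampling noise.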

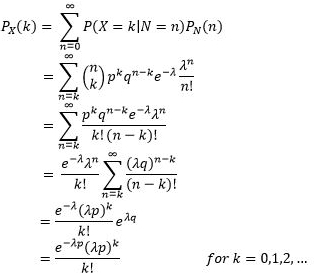

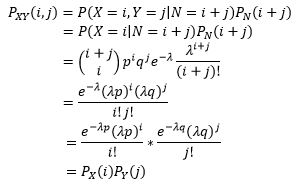

We've now set up the first question! Let's solve it. By the law of total probability, we know that (step 1)

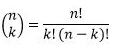

Substituting the binomial and Poisson PMFs into the sum gives step 2. We then expand n choose k as

$$\binom{n}{k} = \frac{n!}{k!\,(n-k)!}$$

and end up with what you see at step 3. Next, we pull out anything that isn't a function of n, since n is the index we are summing over (step 4). Also, please note that the λ to the power of n is incorrect; it should be λ to the power of k. That is an error in my equation that I didn't catch in time, and if you keep following along you'll see that I corrected it in the later steps. If I go back and fix it, I will remove this note.

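In step 5, after substituting m = n − k, the remaining sum is the Taylor series of the exponential:

$$\sum_{m=0}^{\infty} \frac{(\lambda q)^m}{m!} = e^{\lambda q}$$

That is where the e to the power of λq comes from; it's the same technique we saw a while back when we derived the Poisson normal approximation, so you can read more about it there. Finally, since q = 1 − p, we have e^(−λ) e^(λq) = e^(−λ(1 − q)) = e^(−λp), which simplifies the equation to what you see in step 6: X ~ Poisson(λp).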

Okay, so that answers the first of our questions: the marginals. Remember that if we know one, we know the other. Swapping the roles of p and q in the derivation gives Y ~ Poisson(λq), and since we are lazy, we now know both without deriving the same equation twice.
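To double-check steps 1 through 6 numerically, we can evaluate the law-of-total-probability sum directly and compare it with the Poisson(λp) PMF. This is a minimal sketch assuming λ > 0 and 0 < p < 1; the function names are our own, and the infinite sum is truncated after a fixed number of terms (our assumption that the tail is negligible for moderate λ). Each term is the binomial-times-Poisson product with n choose k expanded, so the n! cancels, and we work in logs so large factorials never overflow a float.

```python
import math


def poisson_pmf(k: int, mu: float) -> float:
    """P(W = k) for W ~ Poisson(mu), computed in log space to avoid huge factorials."""
    return math.exp(k * math.log(mu) - mu - math.lgamma(k + 1))


def marginal_x_series(k: int, lam: float, p: float, extra_terms: int = 200) -> float:
    """P(X = k) as the law-of-total-probability sum over n, truncated after extra_terms terms.

    Assumes lam > 0 and 0 < p < 1. Terms with n < k vanish, so the sum starts at n = k.
    """
    q = 1 - p
    total = 0.0
    for n in range(k, k + extra_terms + 1):
        # Binomial(n, p) at k times Poisson(lam) at n, with the n! cancelled:
        # p^k q^(n-k) e^(-lam) lam^n / (k! (n-k)!)
        log_term = (k * math.log(p) + (n - k) * math.log(q) + n * math.log(lam) - lam
                    - math.lgamma(k + 1) - math.lgamma(n - k + 1))
        total += math.exp(log_term)
    return total


if __name__ == "__main__":
    lam, p = 10.0, 0.3  # made-up values for illustration
    for k in range(6):
        print(f"k={k}: series {marginal_x_series(k, lam, p):.10f}, "
              f"Poisson(lam*p) {poisson_pmf(k, lam * p):.10f}")
```

Passing 1 − p instead of p gives the series for Y, which should match the Poisson(λq) PMF in the same way.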

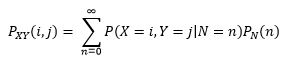

We also wanted to determine the joint PMF of X and Y, so let's see how we would go about solving that! We use the law of total probability again (maybe we will cover it in more depth later, maybe not). In any case, we see that

$$P(X=i, Y=j) = \sum_{n=0}^{\infty} P(X=i, Y=j \mid N=n)\, P(N=n)$$

However, we can use something to our advantage: P(X = i, Y = j | N = n) = 0 whenever n ≠ i + j, because X + Y = N. Only the n = i + j term of the sum survives, and because of that

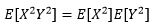
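If you'd like to test the joint PMF numerically, here is a minimal sketch (helper names are our own; λ = 10 and p = 0.3 are arbitrary). It builds P(X = i, Y = j) from the single surviving term, a Binomial(i + j, p) probability times P(N = i + j), and compares it on a grid with the product of a Poisson(λp) PMF at i and a Poisson(λq) PMF at j.

```python
import math


def poisson_pmf(k: int, mu: float) -> float:
    """P(W = k) for W ~ Poisson(mu), computed in log space."""
    return math.exp(k * math.log(mu) - mu - math.lgamma(k + 1))


def joint_pmf(i: int, j: int, lam: float, p: float) -> float:
    """P(X = i, Y = j): only the n = i + j term of the total-probability sum survives."""
    n = i + j
    q = 1 - p
    conditional = math.comb(n, i) * p**i * q**j  # P(X = i, Y = j | N = n) from Binomial(n, p)
    return conditional * poisson_pmf(n, lam)      # times P(N = n)


if __name__ == "__main__":
    lam, p = 10.0, 0.3  # made-up values for illustration
    q = 1 - p
    worst = max(
        abs(joint_pmf(i, j, lam, p) - poisson_pmf(i, lam * p) * poisson_pmf(j, lam * q))
        for i in range(30)
        for j in range(30)
    )
    print(f"largest |joint - product of marginals| on the grid: {worst:.2e}")
```

The gap should be at the level of floating-point rounding, because e^(−λ) = e^(−λp) e^(−λq) and λ^(i+j) = λ^i λ^j let the binomial-times-Poisson term split exactly.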

Notice anything interesting? Do you… do you?!?! We just found out that the joint PMF is the product of the two marginal PMFs, P(X = i, Y = j) = P(X = i)P(Y = j) for every i and j, which means X and Y are independent. We sort of knew this was coming given the setup, but we've derived it all on our own. I'm not sure I need to go through this step by step, because it is much like what we just saw, minus the summing (which, remember, is how we got the marginal). Because X and Y are independent, we can reframe the next portion of the problem like this

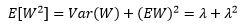

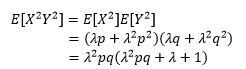

Remember that here we are taking the expected value of a function of our random variables; you can read about that here. In this case we need to note that for a Poisson random variable W with parameter λ, we can rewrite

making this a whole heck of a lot easier to solve like so

Told you we were going to get a little more complex today! Notice that we expanded the expected value using the rewrite for W above and used that relationship to solve the joint case (step 2). Then we distribute and simplify to get to step 3.
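Independence is what lets the expectation of a product split into a product of expectations, E[g(X)h(Y)] = E[g(X)]E[h(Y)]. The exact quantity we're after lives in the images, so as an illustration we pick g(x) = x² and h(y) = y² (our choice). This minimal sketch computes E[g(X)h(Y)] from the joint PMF built through N, and compares it with E[g(X)]E[h(Y)] and with the closed form that follows from E[W²] = Var(W) + (E[W])² = μ + μ² for W ~ Poisson(μ). Helper names, λ = 10, p = 0.3, and the grid truncation are assumptions for the example; with those values the closed form is (3 + 9)(7 + 49) = 672.

```python
import math


def poisson_pmf(k: int, mu: float) -> float:
    """P(W = k) for W ~ Poisson(mu), computed in log space."""
    return math.exp(k * math.log(mu) - mu - math.lgamma(k + 1))


def joint_pmf(i: int, j: int, lam: float, p: float) -> float:
    """P(X = i, Y = j) = P(X = i, Y = j | N = i + j) P(N = i + j)."""
    n = i + j
    return math.comb(n, i) * p**i * (1 - p) ** j * poisson_pmf(n, lam)


def expect_joint(g, h, lam: float, p: float, k_max: int = 80) -> float:
    """E[g(X) h(Y)] summed over the joint PMF on a truncated grid (tail assumed negligible)."""
    return sum(g(i) * h(j) * joint_pmf(i, j, lam, p)
               for i in range(k_max) for j in range(k_max))


def expect_marginal(g, mu: float, k_max: int = 80) -> float:
    """E[g(W)] for W ~ Poisson(mu), truncated the same way."""
    return sum(g(k) * poisson_pmf(k, mu) for k in range(k_max))


def square(v: int) -> int:
    """Illustrative choice for both g and h."""
    return v * v


if __name__ == "__main__":
    lam, p = 10.0, 0.3  # made-up values for illustration
    q = 1 - p
    via_joint = expect_joint(square, square, lam, p)
    via_independence = expect_marginal(square, lam * p) * expect_marginal(square, lam * q)
    # E[W^2] = mu + mu^2 for W ~ Poisson(mu), applied to X ~ Poisson(lam*p) and Y ~ Poisson(lam*q)
    closed_form = (lam * p + (lam * p) ** 2) * (lam * q + (lam * q) ** 2)
    print(via_joint, via_independence, closed_form)
```

All three numbers should agree to many decimal places. Multiplying out the closed form and using p + q = 1 also gives the equivalent shape λ²pq(1 + λ + λ²pq).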

So there you have it. Reminder: I've got my exam coming up, and while this is good practice for me, I do need to study, so don't be surprised if tomorrow's post is quite a bit shorter. In any case, I hope we've cleared up a lot here, and we will look at some other examples moving forward. After my exam we can look at some new topics.

Until next time, don’t stop learning!

*My dear readers, please remember that I make no claim to the accuracy of this information; some of it might be wrong. I'm learning, which is why I'm writing these posts, and if you're reading this, then I am assuming you are trying to learn too. My plea to you is this: if you see something that is not correct, or if you want to expand on something, do it. Let's learn together!!

But enough about us, what about you?