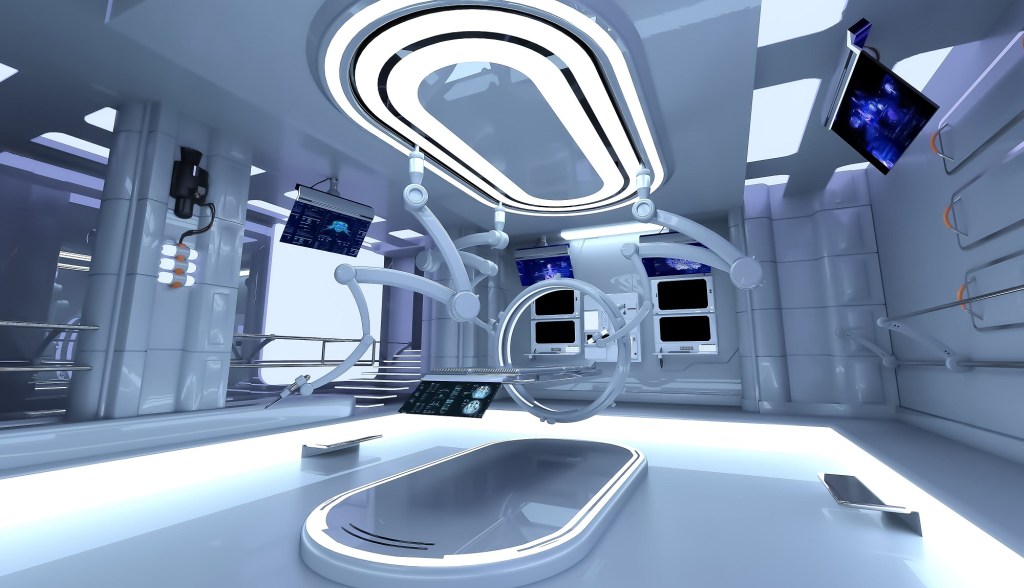

Return to the OR

It’s been a minute since I’ve had an OR experiment. To be fair, there’s a lot going on, and with the stuff we’ve been doing there hasn’t been time. But there’s also the participant factor. People need to agree to be part of the study, and even before then we need to make sure they are a good fit, so there are a lot of factors behind why we haven’t done one for some time. In any case, that is about to change!

This week it’s back to the OR! And like any good researcher I’m in prep mode for that on top of the prep for the long experiment we have coming. OR experiments have a comparatively easy setup. Mostly it’s just connecting equipment, making sure everything is working correctly, then waiting until it’s our turn to do our thing. So while the prep time isn’t extravagant, it’s long enough that I need at least a few hours of time to get everything together.

With everything going on (other experiments, other researchers needing the equipment, things like that), I can’t just grab the stuff I need whenever I want. No, I need to schedule around others, so tomorrow I plan on setting everything up for the OR in the hopes that no one will take the equipment apart because they need it. I’ve looked closely at the schedule and no one has anything planned. Research is fluid, so it’s a risk, but one I’m willing to take.

For those who don’t remember, and so I can remind myself, OR experiments have a particular flow to them. We’re guests in the space, so we need to be mindful of the fact that there are others doing their jobs and allowing us the chance to be there to do our thing. It’s mutually beneficial, don’t get me wrong, but it’s a research-initiated situation, so it would be rude if we made things difficult. Instead, we attempt to make things as simple as possible for everyone involved. It works out for the most part.

Like I mentioned, it’s mostly a hurry-up-and-wait situation, until it’s not. We go in, set up some equipment, they set up for their stuff, then we hang out until it’s our turn to do the research. That can be anywhere from 30 minutes to hours depending on how things go and what we’re doing while we’re in the OR. Our collaborator, surgeon-PI, will be the one helping us get the data we’re after. Usually it’s fun, but sometimes it can be stressful. You never know what to expect.

I like doing research like this because it’s stuff that’s applicable today instead of stuff that could be helpful decades down the line. It’s not that research that is decades from patient use isn’t beneficial or helpful, because it is. It’s very helpful and can be incredibly impactful. But most people want help now too, not later, and the stuff I’m working on in hospital-PI’s lab is stuff that can be applied pretty much right away. There’s not a lot that we do that can’t be used by people tomorrow if they wanted. There’s simply the question of how to apply it better.

Most of the research we do looks at how we can do things better. All (well, most) of what we do in the lab is non-invasive, so it’s safe for anyone, within reason that is. Since it’s safe (again, within reason), the barrier to go from the research side to the clinic is extra low and the potential to help people is extra high. Is what we do going to cure diseases? No, certainly not. But that’s not the goal. There’s a large gap between cure and where a person starts; our goal is to help bridge that gap, even if it isn’t perfect.

Experiments like this are just another way we try to close the gap, or at least narrow it. Because if you ask a person to wait ten or more years for a cure, they’ll do it, but they wouldn’t say no to improvements to their condition today. That’s part of the reason I was so drawn to the clinical research side vs. the stuff I do in the school lab. Both are great, don’t get me wrong, and I love what I do in both labs. I just know that there are people who are looking for something, anything, now that will help them. As a researcher, I think that while finding a cure is important, we shouldn’t let perfection stop us from providing meaningful relief.

Anyway, slightly off topic, but I did want to discuss it since I think it’s important. As for the stuff in the OR, I wish I could share more details, but again it’s all very hush-hush. So for now, you just get to hear me talk about it all vague like. On the bright side, I’ve had several publications recently where I finally got to talk about the work I do, so at least I share the secrets once they are out there and you get all the behind-the-scenes stuff that goes into the papers before they are released.

It’s an interesting tradeoff … whether to invest more heavily in immediate mitigation or comprehensive cures for the future. I’m inclined to agree that putting effort into both is good, if only because it’s difficult to predict which approaches will bear fruit. Maybe we make people wait ten years for a cure, and it doesn’t materialize … well then we’ve wasted time we could have spent at least giving them something. Not to mention that helping people now improves our overall effectiveness as a society and maybe gets us the big advances faster.

This makes me curious whether you’ve heard anything about “longtermism,” and what you think of it. It’s a fairly recent movement whose core idea is that there might be a lot more humans in the future than there are right now (because our species could last a long time and/or colonize the near galaxy), and therefore, ensuring the existence and welfare of those hypothetical future people should be a moral priority … perhaps to the point of neglecting concerns about people (and animals, and nature) alive today.

Preventing human extinction at the hands of future AIs is one issue that longtermists tend to obsess over; that’s probably why I end up hearing about it a lot. Some people think this really isn’t getting enough attention given the potential for severe catastrophe; others say the movement is a way for the wealthy to put money into their pet tech projects and call it “charity.”

I’m still learning about it, but at the moment I’m not exactly a fan. Planning for the future and not being seduced by instant gratification is good, but … invoking enormous numbers of people who might exist someday feels uncomfortably like a way to skew the moral calculus any direction one wants.

September 27, 2022 at 12:23 am

Yeah, I think we need to do both honestly. Long-term projects that could create a cure or something amazing are great, but they do nothing for the people today. Sort of like how fusion is always just 20 years away: sure, when it gets here it will revolutionize power, but until then it’s great to push shorter-term solutions that can bridge the gap so we’re not polluting the one place we have to the point that we can’t exist.

I haven’t heard about “longtermism” honestly, but the way you described it instantly made me think of Roko’s Basilisk. Okay, maybe you don’t want to watch that video though…. bwahahaha, sorry I couldn’t help myself (https://www.youtube.com/watch?v=ut-zGHLAVLI). It’s not a perfect comparison, but the AI comment in particular made me think about it.

I agree that we should plan for the future; after all, we want a world that can sustain life for generations (I would hope!!!!!). But when we’re only focused on the future, today’s problems can be put off for tomorrow’s people, and that kind of thinking makes me nervous. I agree that thinking like that could be used to justify just about anything depending on who’s making the justification.

September 27, 2022 at 7:31 pm

I had prior Roko’s Basilisk exposure, but that video was a fun interpretation. I might keep it around in case I find anyone else who needs to fall under the gaze. Thanks? Hahahaha

The longtermist approach to AI is Basilisk-adjacent, I think. There might be an element of “friendly superintelligence will solve human suffering and therefore we’re obligated to make one as fast as possible,” but there’s also “unfriendly superintelligence is sure to destroy humanity, so we’d better hurry up and figure out how to align it before anybody invents one.”

In case you’re interested in learning more, here’s a fairly comprehensive article from the sort of people who might promote Longtermism: https://www.effectivealtruism.org/articles/longtermism

And here’s a really scathing take: https://www.salon.com/2022/08/20/understanding-longtermism-why-this-suddenly-influential-philosophy-is-so/

September 27, 2022 at 9:37 pm

Oh good so I didn’t doom you and any relatives to eternal AI torment by showing you that 🙂

Thanks for sharing the links! I’m reading over them now! I haven’t gotten far, but it already sounds semi-cultish, though maybe that’s just me. Anything being pushed by Musk tends to feel cultish though IMO.

September 28, 2022 at 7:15 pm