Day 114: Classifier Update

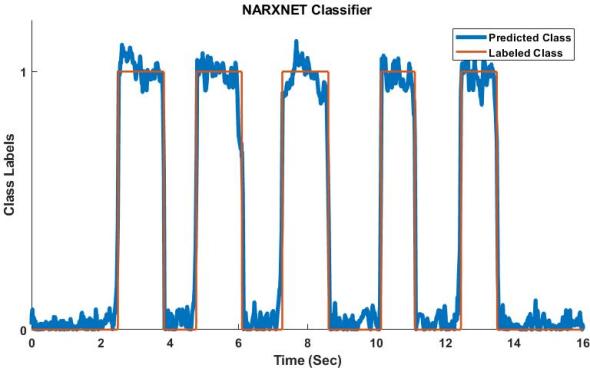

Behold! My amazing predictive power!! *insert evil laugh here*

It’s been a long and exhausting road to get here. As you may recall, I’ve given several updates already on my progress, and I THINK I’ve finally hit success. In fact, I know I have. The problem now? What the neural net saw to make its predictions is a mystery to me, so I don’t know why or how it works… yet.

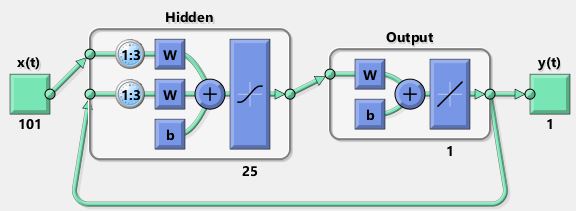

Neural nets are sort of like a black box. To add to the problems (sort of), this was a nonlinear neural network (a closed-loop NARXNET, to be exact). I’ve posted what that looks like before, but if you need a reminder, we are talking about a closed-loop neural network that looks like this:

In fact, that is mine. It took a lot of messing around to get everything working properly: a LOT of trial and error, restarts, and adjustments to the setup. However, I managed a 98.8% accuracy, not too shabby if I do say so myself.

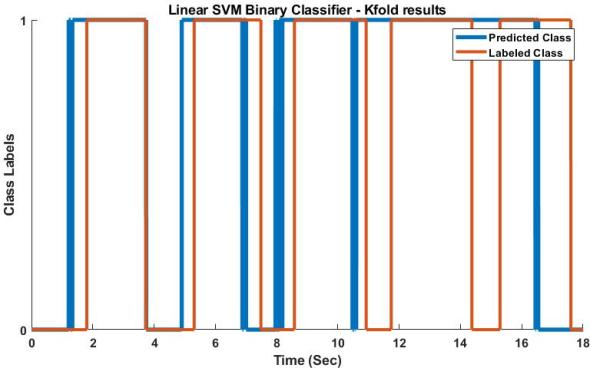
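If the "closed loop" part is fuzzy, here is a tiny sketch of the idea in Python. To be clear, this is NOT my network: `one_step_model` and its weights are made up purely for illustration, and the data is invented. The only point is the difference in what gets fed back. In open-loop mode the measured previous output goes back in; in closed-loop mode the model's own previous prediction does.

```python
# Toy stand-in for a trained network (hypothetical, weights are made up):
# predicts y[t] from the previous output y[t-1] and external input x[t-1].
def one_step_model(prev_y, prev_x):
    return 0.5 * prev_y + 0.3 * prev_x


def open_loop(model, x, y_true):
    """Open loop: the MEASURED previous output is fed back in."""
    preds = []
    for t in range(1, len(x)):
        preds.append(model(y_true[t - 1], x[t - 1]))
    return preds


def closed_loop(model, x, y0):
    """Closed loop: the model's OWN previous prediction is fed back in."""
    preds = []
    prev_y = y0
    for t in range(1, len(x)):
        prev_y = model(prev_y, x[t - 1])
        preds.append(prev_y)
    return preds


# Invented example data: an external input and a "measured" output.
x = [1.0, 0.0, 1.0, 1.0, 0.0]
y_true = [0.0, 0.4, 0.1, 0.5, 0.6]

open_preds = open_loop(one_step_model, x, y_true)
closed_preds = closed_loop(one_step_model, x, y_true[0])
print("open loop:  ", open_preds)
print("closed loop:", closed_preds)
```

Both modes agree on the first step, since they start from the same known value, and then drift apart because the closed loop only ever sees its own guesses. That is what makes closed-loop prediction harder, and why a good result there feels like a win.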

Now, I’ve been promised accuracy like that before, so caution is required. HOWEVER, that was the accuracy I got after I fed it data it had never seen. THAT was what I wanted and I’m super happy with the result. In comparison, my “simple” SVM linear classifier gave me an accuracy of 81% with the training dataset and a 73% accuracy rate with data it had never seen. Not horrible, but not in the 90% range I wanted. Note: SVM classifiers (support vector machine classifiers) are not simple, hence the quotes around it.

Linear SVM classifier results with a test set (unseen dataset) after doing a K-fold cross validation.
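For anyone curious how the "K-fold on the training data, then score on unseen data" routine fits together, here is a minimal sketch in plain Python. Big caveats: the data is synthetic, and the classifier is a simple threshold stand-in (`fit_threshold`), not an SVM and not my actual setup. Every name in it is hypothetical. It only shows the bookkeeping: hold out a test set first, cross-validate on what is left, then score once on the held-out part.

```python
import random


def k_fold_indices(n_samples, k, seed=0):
    """Shuffle sample indices and split them into k roughly equal folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def fit_threshold(xs, ys):
    """Stand-in classifier: threshold halfway between the two class means."""
    mean0 = sum(x for x, y in zip(xs, ys) if y == 0) / ys.count(0)
    mean1 = sum(x for x, y in zip(xs, ys) if y == 1) / ys.count(1)
    return (mean0 + mean1) / 2


def accuracy(threshold, xs, ys):
    """Fraction of samples where 'x above threshold' matches label 1."""
    hits = sum((x > threshold) == bool(y) for x, y in zip(xs, ys))
    return hits / len(ys)


# Synthetic 1-D data: class 0 centred at 0.0, class 1 centred at 2.0.
rng = random.Random(42)
ys = [i % 2 for i in range(200)]
xs = [rng.gauss(2.0 * y, 1.0) for y in ys]

# Hold out the last 50 samples; nothing below touches them until the end.
train_x, train_y = xs[:150], ys[:150]
test_x, test_y = xs[150:], ys[150:]

# 5-fold cross-validation on the training portion only.
folds = k_fold_indices(len(train_x), k=5)
cv_scores = []
for i, val_idx in enumerate(folds):
    fit_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
    thr = fit_threshold([train_x[j] for j in fit_idx],
                        [train_y[j] for j in fit_idx])
    cv_scores.append(accuracy(thr,
                              [train_x[j] for j in val_idx],
                              [train_y[j] for j in val_idx]))

# Refit on all training data, then score once on the unseen test set.
final_thr = fit_threshold(train_x, train_y)
test_acc = accuracy(final_thr, test_x, test_y)
print("mean CV accuracy:", sum(cv_scores) / len(cv_scores))
print("held-out test accuracy:", test_acc)
```

The number that matters is the last one, since it comes from data the model never touched while being fit or tuned.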

Here’s the deal though: I probably won’t be using this for my QE. There’s no point, since it’s a result I cannot really explain. But it gives me a good start for future work and shows that what I’m doing works. In essence, it’s a proof of concept, not something that I will be sharing with everyone explicitly. Still, it’s pretty freaking cool and gives me something to look forward to.

Until next time, don’t stop learning!
