Universe 25 – A paradise lost

Utopia, depending on who you ask you almost certainly will get a different answer on what the perfect world would look like. No, not what the world COULD be, but what the perfect world would look like. If you could snap a finger and alter the very fabric of reality to create the perfect world, it would almost certainly look very different than the world someone else would create. Maybe that’s why paradise is best left to novelists. Still, what would happen if we could create a general paradise? This is a story of mice and men.

(more…)Return of the long experiment

It’s been awhile! Things have been busy so I’ve had to prioritize what I wanted to get done to avoid the crash I had after the DARPA event at the end of last year. So there’s a lot of updates coming, obviously. I still want to blog and I hope to get back into the daily routine soon enough, but I wouldn’t be surprised if it didn’t happen this week, because this week it’s the return of the long experiment. So let’s break it down a bit and talk about why this round will be different than the previous versions. Well as much as I can talk about anything I’ve been doing anyway.

(more…)The dissertation update

It’s been a busy few days, but progress has been made and that’s all that matters right now. I knew what I was getting myself into when I started this whole thing and it’s only going to be for a little bit longer (if all goes well) so I don’t mind it so much. Right now it’s more about the timing of everything. With DARPA deadlines fast approaching I want to be certain that I can get at least something done to show at the conference, as usual, even if it’s that what I’m proposing doesn’t work.

(more…)The curious case of something, or nothing?

It was such an obvious gap that I didn’t understand why it was there. Four years ago, almost exactly, I realized that when it came to the research our lab was doing, brain-machine interfaces mostly, that gap existed, I was confused. Surely I was mistaken, but in-depth searching turned up nothing. Coming in I thought the field was mature, everything that could be discovered was and now it was a slow slog of incremental improvements. Yet there it was staring me in the face like a red car in a black car lot. And thus super secret technique (SST) was born.

(more…)A long conclusion

We did it, another “long experiment” in the books. Total time? Twelve hours. Twelve, non-stop. Okay there was exactly one bathroom break, one coffee drank and one protein bar eaten, but I kid you not, that was all there was time for. When I say long experiment, I mean LOOOOOONG experiment. 98% of that is standing, well moving around doing things, so let’s say “not seated” since that’s more accurate. So, was it worth it? Well now, that’s the real question isn’t it?

(more…)The last day of prep

Well I’m not pulling an all nighter today so that’s good news. Two weeks ago we had our first “long experiment,” now we’re going into the second one. Long experiment because it will take all day and I mean all day. We start at 7am and I’ll be lucky if we finish at 7pm, that kind of long. Since long experiment is, well long, I’ve been working on lots of different ways to optimize the experiment. That won’t make it shorter, but I’m hoping it will be less stressful.

(more…)When the assumptions fail

We make simplifying assumptions all the time. Assume the cow is a sphere. Assume a frictionless surface. Assume no air resistance. If you’ve ever taken a physics course, you’ve run into these at least once. Simplifying assumptions work because they get us an answer that is close enough to the “true” answer that we don’t need to do all the extra work of finding a more complex high accuracy solution. We like assumptions because they make our lives easier! But what happens when your simplifying assumptions turn out to be incorrect?

(more…)The next countdown

Well the next long experiment is slowly coming and by slowly I mean AHHHHHHH!!! It’s only a few days away!!!!!! What are we going to do, someone panic and use more exclamation points!!!!!!! Seriously though, we’re counting down to the next long experiment and hospital-PI is already quizzing me to make sure we’re ready. This needs to go off without a hitch, but as we know the best way to ruin a plan is to try and execute it. Well there’s no substitute for practice… I guess?

(more…)The long preparations

As promised, I’m already preparing for the next “long” experiment. This one will be just as long as the previous, if not longer, so we need to be using our time the best way possible. Ideally we’ll be getting all the data, all of it! But to do that we’re going to need a lot of work between now and then. Part of that will be working out some new code to automate a lot of the stuff we’re trying to do, but some of it will involve making things, because of course it will.

(more…)Dissertation data update 2

Yep, we’re still being lazy and just numbering the updates. Maybe I’ll come up with fancy titles, but for now, this works. Plus this makes it easier for me to search back later. So now that I’ve justified my laziness, let’s talk about where we are. We are here. Okay, seriously, currently I’m still in the pre-processing phase, but there’s good reasons for that. Today I may actually finish pre-processing and I’m hopeful I can hammer out at least a rough idea of what we have… maybe.

(more…)Long experiment aftermath

Well yesterday happened and I can feel it. Everything hurts at the moment, but I knew I was going to be sore today so there is absolutely nothing serious planned today besides maybe cooking something for dinner, maybe. Leftovers are a way of life in research, leftovers and protein bars. I wish I were joking, both hospital-PI and I brought protein bars as a “lunch” yesterday, which may or may not have been eaten as we ran from one part of the hospital to the next.

(more…)The looooong experiment

Ever have one of those nights where you KNOW that tomorrow you have a huge thing going on and for whatever reason, you just CANNOT sleep to save your life? Maybe it was because every few minutes I was reminded of something I needed to do prior to the experiment, or maybe it was just because there was already so many things left undone. Whatever the reason, what should’ve been a day for me to get a full night’s rest turned into what amounts to a power nap.

(more…)The day before tomorrow

It’s basically the big day at this point and I still have a bit of work ahead of me before the official kick off. We have a very strict schedule and I need to make sure that things go smoothly, or as smoothly as possible. I’m not going to lie, we’re already off to a bad start. It’s going to be rocky and we have a whole 12 hours or so for the electrical gremlins to get into the equipment. The pressure is on!

(more…)Experimental prep: part 2

I feel like a broken record at this point, buuuuut… it’s been one hell of a day. It’s been non-stop go, go, go and there’s still one day between now and the big event. There’s still a lot of work to be done and I’m not sure how long it will take, but I have a feeling tomorrow will be a very long day. In fact, these next few weeks will be some of the hardest in recent memory and that’s saying something.

(more…)Experimental prep: part 1

Okay there’s a few days between now and the incredibly complex and labor intensive experiments we have coming up on Friday, so there’s a high chance that this will be a multi-part discussion, hence the title of the post for the day. We’re rapidly approaching the big day and there’s so much work that needs to happen I’m starting to get a little nervous that I have enough time to do it all. To add to the chaos, there are other experiments happening, that require equipment that we’ll be using for Friday, so there’s no way to setup early.

(more…)A completed part!

Ideas are perfect, but the first time you realize that idea it’s often a mess. It’s still an amazing thing that didn’t exist, but it’s never as perfect as in your imagination. You have all the parts, everything fits just the way you want, and it works exactly how you expect it to work, until it becomes a reality. Then it just becomes painful.

(more…)Build, then rebuild

Any good idea really begins life as an okay idea. No matter how brilliant the idea, it’s not fully formed, so it’s imperfect. It’s that imperfect nature that makes it seem perfect. You don’t have to look close at the details. It’s what comes later, the taking a blob of an idea and shaping it into something physical, something real, that the idea goes from being okay to being great. Any realized idea in my opinion is a great idea, because you put the work into making it exist and that alone is worth something.

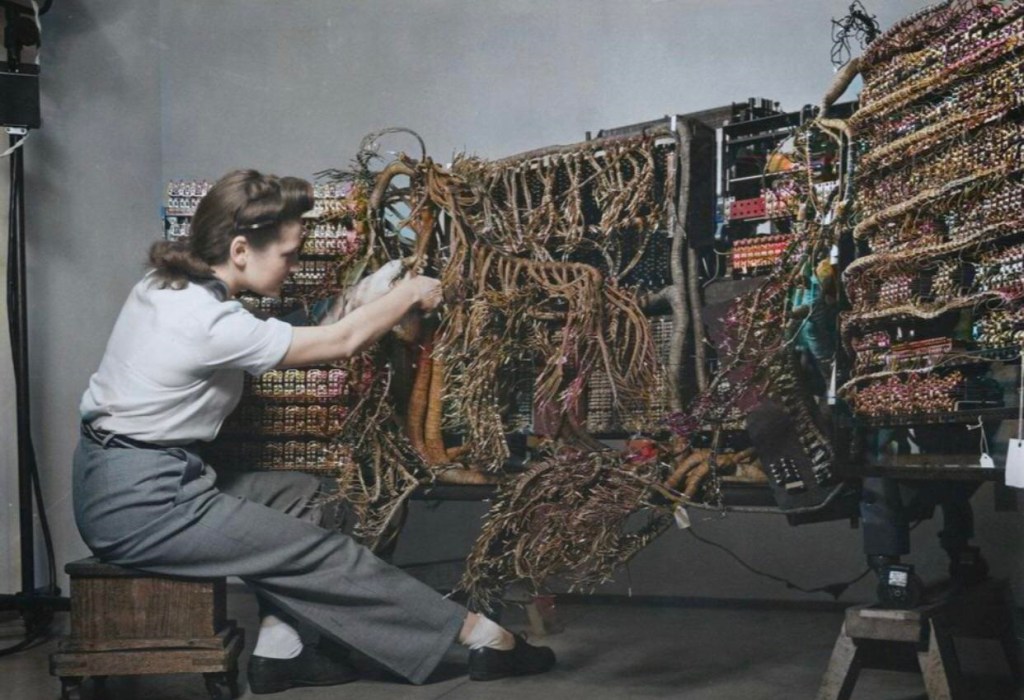

(more…)Adventures in equipment modding

I have it! I finally (as of yesterday) got all the parts needed to build the stuff I wanted to finish for the studies we have coming up. This will be months of thinking through how to improve our setup and improve the noise rejection capabilities of the equipment. A LOT of planning went into the design and now that I have all the parts for assembly it’s time to earn my keep. But it’s not all smooth sailing.

(more…)Dissertation – The first aim

Okay, with my data firmly in hand (so to speak), I’m ready to start tackling my first aim. Despite having all my data collected however, I’m incredibly behind schedule, by about four months if I check my handy proposed timeline. So it’s a race to catch up if I want to graduate on time and oh do I want to graduate on time! Then there’s the other issue, I have the DARPA Risers conference coming so it would be nice to show something new, that’s only a few months away so the race is on…

(more…)Success! 10 of 10 dissertation experiments

It has been a struggle and I really, really don’t want to ever have to do something like that again, but after a few short weeks (well what feels like short), I now have 10 out of 10 of the datasets needed for my first aim. Will there be an aim 2 data collection? Maybe, if what I’m proposing turns out to actually work. In any case, this is now done and I can focus on the next step.

(more…)Success! 8 of 10 dissertation experiments

Well today was the day that would never end. I only had one experiment today, but it took the whole day. To be fair, we didn’t start until the afternoon, but it was well into the evening when we wrapped up and that was exhausting. Since it’s been such a long day, I’ll recap how things went. For everyone who’s interested, but also for myself since I hope this will help with my dissertation writing sooner or later.

(more…)The last participants

Well I had other topics in mind for the day, but I’m so excited that I wanted to make a little post about it. I’ve finally confirmed the last three participants for my dissertation project. I have 7 out of 10 datasets collected and needed three more for this first aim. For the past few days I was nervous that I wasn’t going to get it done this week. Sometimes that happens, things don’t work out how you plan, but that isn’t the case! I’ve confirmed all three participants for my project.

(more…)A timeline revisited

Balance, it’s all about balance. Right now I have school on one side, the hospital on the other. So far things have been good and in order to keep the balance, hospital-PI and I had a long discussion about the big idea I had almost a year ago and we agreed that the pace is going too slow. So it’s time to hit the turbo button. But what does that look like and where do we go? This is a tale of collaboration, combining different studies, and me, stuck in the middle of it all.

(more…)Experiment juggling

We’re off to a busy week, not that the previous few weeks haven’t been busy mind you! Since I started my dissertation data collection it’s been non-stop, but there’s a lot of other things going on too. In fact, I’ve got experiments on both ends, the hospital side and the school side, so the next few weeks will be just a touch busy! I just got word today that we’re starting another set of experiments and we now have additional experiments later on next month, and by next month I mean next week.

(more…)Experimental preparations

Finally. I’ve got not one, but two dissertation experiments lined up one for tomorrow and one on saturday. It’s going to be a busy few days and there’s a lot of prep that needs to happen today and ostensibly tomorrow before and after the first experiment. Since I am in the habit of sharing my process, I figured it would be a good idea to talk about how we set up for experiments. I’ve spoken often about how long it takes, but I can’t find a recent example of going over what that actually entails. This will be… interesting.

(more…)On the design of experiments

Okay, well a lot has happened today and I’m not even sure where to start! I guess the main thing I want to write about today, despite being exhausted, is planning. I plan everything, I probably write more about my plans than anything else now that I’m done taking my required classes. It’s no secret, I love planning because having a plan means knowing what to do when that plan goes sideways. It means knowing what to look for before a plan goes sideways. Most importantly, it lets you know you’re on the right track.

(more…)A successful first test

Sometimes you have to work weekends, especially if you end up working full-time. But sometimes you have to do what you need to do if you want to graduate on time. Hint, I really want to graduate on time, but then again time is relative. Grad school is time intensive and as I’ve talked about in the past, you have to do the work when you find the time and energy. Yesterday, I made time to test some new equipment and it went well!

(more…)The first equipment test!

It’s been literally months in the making, but we finally have the equipment I need to start my dissertation and even though it’s the weekend, I’m making a special trip to campus (it’s been like a month since I’ve been there) to test out the equipment and get things ready for my first set of dissertation experiments! I’m hoping things go smoothly so that I can get the data needed, but as with all things, some assembly is required.

(more…)A small step towards graduation

Well the hurry up and wait phase is over. As of today I now have the equipment I need for my dissertation experiments so it should be full speed ahead with data collection. Now I could do data collection and analysis simultaneously, but I don’t think I want to do that since I’m in a hurry and I’ll explain why in a minute, but now that I have equipment we’ve made a small step toward finishing this project. There are some things still lingering, but I think we’re on the right track forward. The only question is, can I get it done in time to graduate in the spring?

(more…)Small beginnings

Sometimes big things have small beginnings. Honestly I’m not even sure how to talk about it vaguely, but I’m going to give it my best shot. The short version is that I officially have my first dataset for an idea I had almost a year ago now, a “big idea” as it were. I’m confident that this will work, I haven’t had a chance to see what we got, but I did make a few initial checks to ensure everything was collecting correctly. Unfortunately, despite how monumental this feels for me, I can’t explain what we have… yet.

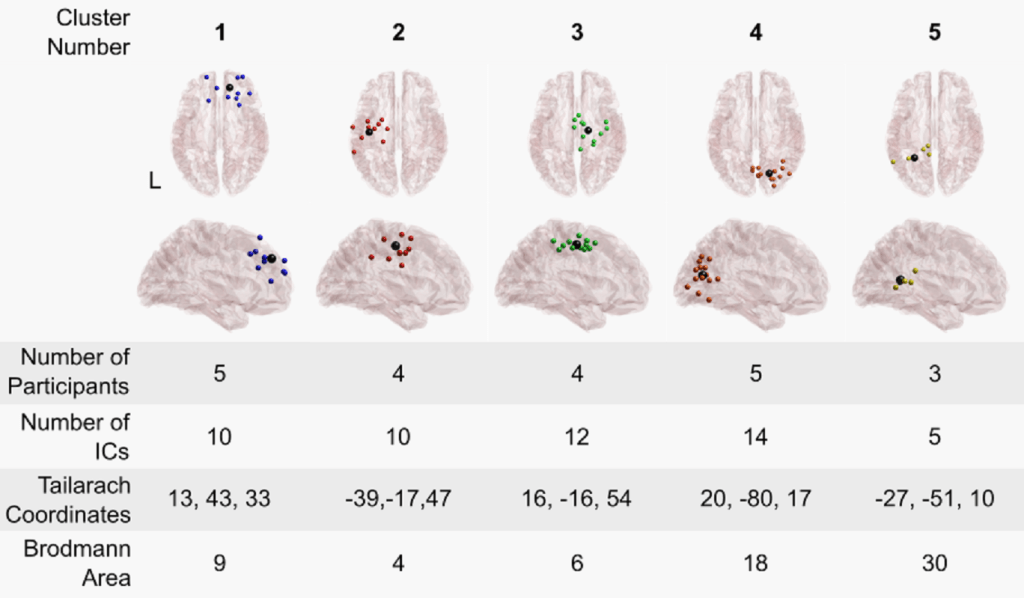

(more…)Effects of transcutaneous spinal stimulation on spatiotemporal cortical activation patterns

It’s official! My latest paper, what I’ve been calling “last paper” is now typeset and I can share my fancy videos better. I’m excited that this is finally here and since I gave a good overview of the paper (here) today I’ll discuss a bit more about why this paper was important and why the dataset was such a pain, both of those I had to shorten due to the length of the post.

(more…)More experimental prep

Things have been busy, welcome to my life. But seriously, there is a lot happening right now, most of which is top secret (shhh don’t tell anyone). But as usual, leading up to an experiment, there’s a lot of prep work that needs to happen. Mostly because things always go wrong and we want to maximize our chances for them to go right. So let’s dive into what it’s like the second time.

(more…)Data mountain

Well the week is over and I’ve barely been able to drag myself out of bed it’s been so exhausting. Unfortunately, we’re doing it all again next week too, so it’s not over yet. But the first of two test experiments are done, I say test because these don’t actually count toward our original set of experiments we’re doing later this summer. Meaning there’s going to be a lot of work coming my way, fun times.

(more…)More on experimental prep

Since we have a rather large and cumbersome set of experiments happening it’s my job to make sure they go off without too much trouble. I say too much trouble because no matter how much you plan, test, and retest, something will go wrong. Heck it may not even be wrong on our end, it may be wrong on someone else’s side, but that’s why we plan. So once again I’m talking about how we prep for our experiments.

(more…)A big revision

Ever have a really good idea, maybe even a “big idea?” Well I’ve apparently had a few, but some are bigger than others and currently we’re diving into some very intense experiments using my “big idea,” but we’re running into certain limitations. While I could happily call it here, not put the effort into doing anything about it, and just live with it, that’s not happening. For anyone who knows me, that’s not even an option. So instead big idea is getting a big revision.

(more…)Making greatness

If you build it, they will come? Okay not quite, but if you can buy it you need to make it. That seems to be the theme for the next few weeks as we’re getting ready to start several different experiments and the scope of them is massive. Not so much the experiments themselves, but everything that is going into them and everything we are going to get out of them.

(more…)Adventures in experimental design

When did people start trusting me with experimental design? I have no idea, but here we are and I’m helping come up with a series of experiments we will be doing in roughly one week… seven days. So between now and then, we have a lot of things to figure out. We have a rough idea about what will happen, but there are a lot of moving parts and it turns out we are probably missing a few things so we’re going to have to get creative if we really want to get to the bottom of things… fun times for all!

(more…)The mysterious data

I sometimes miss the days when the answers were in the back of the book. At this point I would take even just having answers that are semi related to the questions I’m trying to work out. Being a researcher is a double edged sword. On one side, for a brief moment in history you will know something that no other person in the world knows. On the other, how do you know that it’s correct? Questioning your results is an important part of research and right now, there’s more questions than answers.

(more…)The first time is always the hardest

If something can go wrong, it will go wrong. Especially when it’s the first time. I’ve got my first dataset for my PhD dissertation, but it was a battle of wills and the technology made sure to let me know, it’s in charge here. But at the end of the day, I got what I wanted, so it’s a win, even if it’s a bit of a loss too. Frankly, I’m so exhausted right now so forgive me if this post is all over the place, well more than usual.

(more…)Experiment prep

Tomorrow is my first experiment! To get ready I have a few loose ends to tie up before the big day. Mostly I just need to write everything out, make a list of items I need to set up, organize a few things, the stuff no one thinks about when they head off to an experiment. Tomorrow is going to be bumpy, but the first time always is, so best to be prepared.

(more…)An ounce of preparation

What a week. It’s only Wednesday and it feels like it’s just never going to end. There are three major events coming up this week, meaning I will be working this weekend unfortunately, but at least I made it through the day. Tomorrow will be a major event for me, dare I say bigger than my proposal defense just two days away.

(more…)The first one

Ever look up an answer in the back of the book? The problem with research is there’s no back of the book, but typically when you do an experiment you can at least glance at research surrounding your work and make sure you’re not going completely off the rails. Because I like to make my life unbelievably difficult, and because I don’t like taking small steps forward, I’m going to (hopefully) be the first to do a few things this year. But it’s not as glamorous as it sounds.

(more…)Another OR experiment

Time to dust off my scrubs, it’s back to work for me. In fact, I have been so busy with other things I don’t think I mentioned it, but today is yet another OR experiment. With how well the previous two went, I’m hopeful that this one will go well, but a somewhat alarming email from hospital-PI yesterday may throw a wrench into any plans I had.

(more…)Dear data gods

We have offered our typical sacrifice of blood, sweat, and some might even say excessive amounts of tears. Yet clearly we have offended you and we are not sure how or why. We ask that you forgive our ignorance and help us understand how we can fix this. We are humbled and sorry, we should not seek higher truths, yet we must continue.

(more…)Mad dash to the OR

Today has been a day! The trick with doing research in the OR is that the schedule for the surgeries don’t get finalized until the day before and sometimes even then things change at the last minute. I’m not sure when the schedule was finalized or if anything changed, but this morning I got a text that threw everything out of order. The best laid plans of mice and men…

(more…)Sometimes things get cancelled

Today was a big day. We got the equipment ready, the patient consented, and everyone on the clinical side of things was on the same page we were on. Things were going well. Surprisingly well in fact, that should’ve been the first sign something was going to go wrong. This time it wasn’t our fault though! Sometimes you just can’t catch a break, so maybe next time.

(more…)Off to the OR!

Tomorrow is the big day, our second experiment and while I’m a little nervous, I’m also excited to see what we can do. Last time around we had some issues… okay a lot of issues, but that’s because it was the first time we’ve ever tried something like this. This time around we worked with the team that will be in the OR with us so we know what they are doing and this time they know what we are doing. Basically we’re aiming for a whole lot less drama and a whole lot more useable data, meaning any usable data!

(more…)A unique figure design

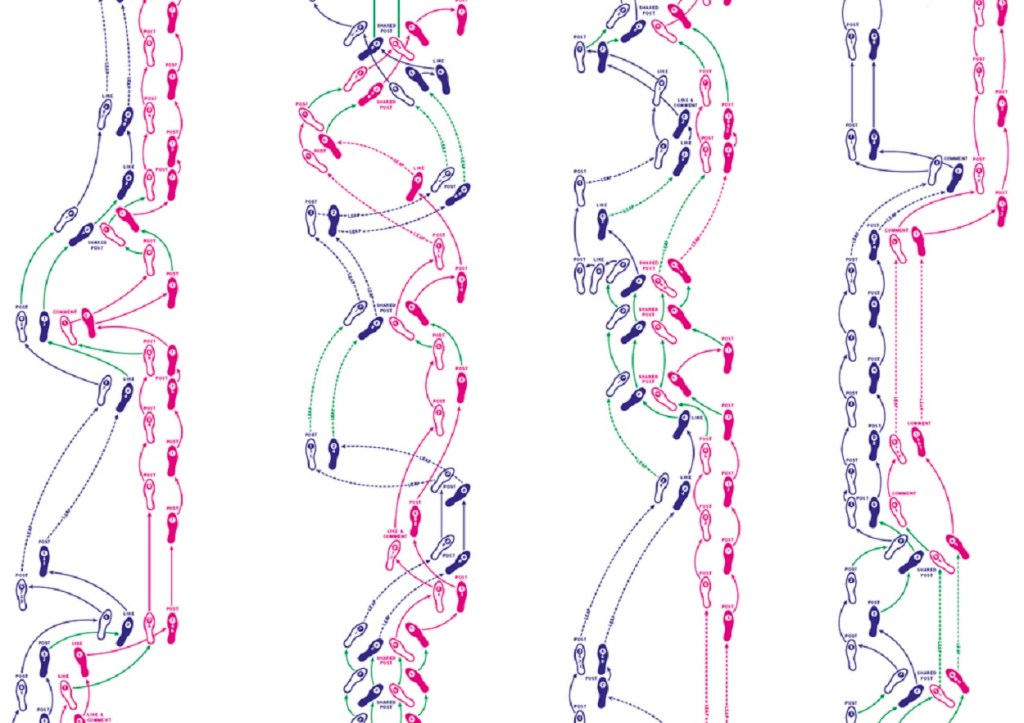

Over the past few weeks I’ve been hard a work making a new visualization for some of the data I’d recently processed. This again is for the project I won an award for (here), and while I’m not trying to brag, I’m super proud of how it came together. It was the first time I tried to do something like this and not only did my main-PI give me his approval it sounds like a lot of people from the lab were impressed with this as well. Unfortunately, I can’t quite give away what I did or how I did it, but I can share some of this.

(more…)Experimental limits

Well this is turning into a drama, but we keep having issues with the experiment. There are once again changes that need to be made, we’re four out of ten planned experiments into the project and while we’ve done the first four the same way, we keep trying to adjust our testing to a slightly different version of the protocol and it’s running into… issues to say the least. There are some things we just can’t accomplish using our testing paradigm and we have to accept that, but we still try to push those limits, even if it hurts.

(more…)Changing the experiment

For the past few days I’ve talked about the importance of experimental design. Well sometimes midway through you realize a better way to do things. That was yesterday when I realized the thing we were looking for in our experiment could be found a better way. I’m not thrilled about this, but sometimes it needs to happen and I think we will have a better chance of finding what we’re after if we do it this way.

(more…)