Panic! At the lab

Ever have a day where nothing went right? We had a series of unfortunate events, so we couldn’t actually do the research we wanted to do. It’s not a huge deal, but what was impressive was the number of things that went wrong. Each problem compounded the last, yet still let us continue right up until the point where we were about to start the study. Only then did we find out we had to stop. There was no danger and the issue was figured out after the fact, but it was an impressive chain of events.

Defining parametric tests in statistics

We’ve been throwing around the term a lot in this series. I’ve been saying in parametric statistics this, in parametric statistics that, but I kept putting off giving a definition. It’s not because it’s hard to understand, it’s just that typically when you’re doing statistics you already know if you’re using a parametric test, but because we try to make no assumptions in this series, we’re going to put this to bed once and for all. Today we’re talking about parametric statistics!

Independence in statistics

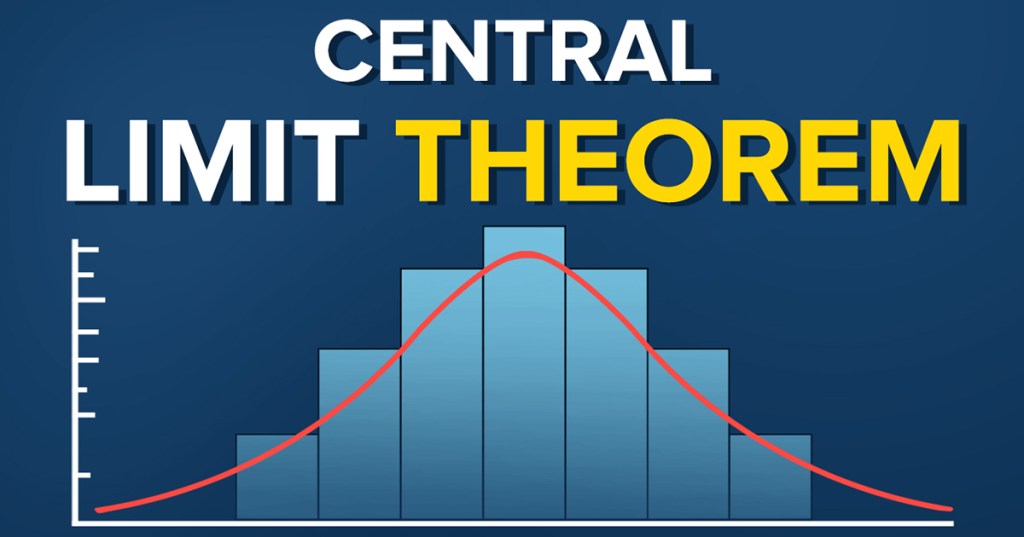

A while back we introduced the central limit theorem, which makes the means of our samples behave as if they were normal (Gaussian), almost by magic. Normality is one of the assumptions needed for parametric statistics (the most commonly used kind). Today we’re introducing another assumption, that the data are independent. The idea of independent events is probably straightforward, but it’s yet another bedrock of statistics that we should talk about in depth to help us understand why things are the way they are.

Variance in statistics

Variance, it’s one of those concepts that gets explained briefly, then you find yourself using it over and over. Now that I have a free moment, I figure it’s about time to revisit the “simple” concept and just take a minute to appreciate why we have to deal with variance so often and why we try so hard to minimize it when we’re doing experiments. Just like the discussion about the mean, there’s some subtlety that goes into the idea of variance and its square root cousin, standard deviation, and we skip over it in favor of getting into more complex topics.
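To make the “square root cousin” relationship concrete, here is a minimal sketch using Python’s standard `statistics` module. The data values are made up for illustration, and I’m assuming the population versions of both formulas (dividing by n rather than n - 1).

```python
import statistics

# Made-up measurements, purely for illustration.
data = [2, 4, 4, 4, 5, 5, 7, 9]

# Population variance: the average squared distance from the mean.
variance = statistics.pvariance(data)

# Population standard deviation: the square root of that variance,
# which puts the spread back into the same units as the data.
std_dev = statistics.pstdev(data)

print(f"mean = {statistics.mean(data)}")
print(f"variance = {variance}")
print(f"standard deviation = {std_dev}")
```

For sample data you would more likely reach for `statistics.variance` and `statistics.stdev`, which divide by n - 1 instead.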

Fun with RStudio

Okay, not really. Having to use R is a pain. I’m not a fan and the structure they use is very confusing to me as someone who uses MATLAB on a regular basis. I understand matrices, and I regularly make and successfully work with higher dimensional matrices (more than 3 dimensions; thinking about a 20+ dimensional matrix hurts your brain, but hey, whatever gets the job done). R, on the other hand, feels foreign and the commands feel clunky.

The Bonferroni correction in statistics

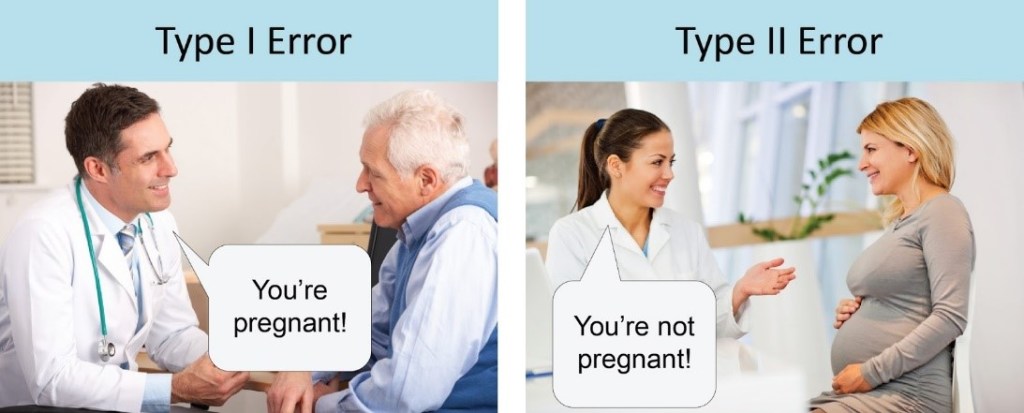

Well we’re doing it, today we’re talking about the Bonferroni correction, which is just one of many different ways to correct your analysis when you’re doing multiple comparisons. There are a lot of reasons you may want to do multiple comparisons and your privacy is our main concern so we won’t ask why. Instead we’re going to talk about how to adjust your alpha (chances of making a type 1 error) so you don’t end up making a mistake.
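As a rough sketch of the adjustment, the Bonferroni correction divides alpha by the number of comparisons and holds every p-value to that stricter threshold. The function name and the p-values below are hypothetical, chosen only to show the arithmetic.

```python
def bonferroni_alpha(alpha: float, n_comparisons: int) -> float:
    """Return the per-comparison alpha after a Bonferroni correction."""
    if n_comparisons < 1:
        raise ValueError("need at least one comparison")
    return alpha / n_comparisons


# Hypothetical p-values from four comparisons (not real data).
p_values = [0.004, 0.03, 0.2, 0.012]

adjusted = bonferroni_alpha(0.05, len(p_values))

# A comparison only counts as significant if it beats the adjusted alpha.
significant = [p < adjusted for p in p_values]

for p, is_sig in zip(p_values, significant):
    print(f"p = {p}: {'significant' if is_sig else 'not significant'}")
```

Notice that p = 0.03 would pass an uncorrected alpha of 0.05 but fails once the threshold is split across four comparisons.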

One-tailed vs. two-tailed tests in statistics

Sit right back because we’re telling a troubling tale of tails full of trials, twists, and turns. The real question is, will we run out of words that start with t during this post? It will be tricky, but only time will tell. When do we use a two-tailed test vs. a one-tailed test and what do tails have to do with tests anyway? With a little thought, I think we can tackle the thorny topic. In short, let’s talk tails!

The p-value in statistics

We’ve been dancing around the p-value for some time and gave it a good definition early on. The p-value is simply the probability of seeing data at least as extreme as ours if the null hypothesis were true. The lower the alpha we compare it against, the less chance we have of making a type one error, but the more we increase the probability that we’ll make a type two error (see errors in statistics for more). However, just like with the mean, there’s more than meets the eye when it comes to the p-value so let’s go!

The z-score in statistics

Okay, time to get back to statistics, if only for today! P-value, z-score, f-statistic, there are a lot of ways to get information about the sample of data you have. Of course, they all tell you something slightly different about the data and that information is useful when you know what the heck it is even trying to tell you. For that reason we’re diving into the z-score, it’s actually one of the more intuitive (to me anyway) measurements so let’s talk about it!

The mean in statistics

Yeah it seems simple, I mean (no pun intended) the mean is just the average! Yet as with so many different things in statistics there’s more to the mean than meets the eye! We’re going to go into why the mean is important, why it’s our best guess, why it may not always be your best option, and why we work so hard to find the mean sometimes! It seems simple, but I promise today we’re answering a lot of the big “why’s” in statistics, so let’s go!

The Tukey test in statistics

No, not turkey, Tukey, although they are pronounced very similarly (depending on who you ask I guess? I’ve heard people pronounce it “two-key”). Anyway, today we’re saving our job and avoiding the wrath that comes with failure. The mad scientist boss of ours tasked us with testing mind control devices and determining statistically which one (if any) worked. After the last failure, we now had four new devices to test, so we couldn’t use the same method as before. However, an assistant’s work is never done and we didn’t finish the job! That’s what we’re going to do today.

The ANOVA in statistics

Our mad scientist is back and this time they are not taking any chances! After statistical failure in the last example, they created not just one, but four mind control prototypes! We’ve been tasked with determining if they are working or face certain DOOOOOOOM! Sure, working for a mad scientist can be stressful, but we can do this… right? I’ve been dreading this post and you’ll see why, there’s a lot to cover before we solve the mystery, so let’s dive into it!

Significance in statistics

That feeling when your p-value is lower than your alpha, aww yeah! But what does it really mean? It’s one thing to say there is significance and on the surface it means the two things are different “enough” to be considered two things, but I think there’s a simpler way to explain it. So today we’re going to talk about what significance actually means in the practical sense. Maybe it’s super obvious, but it never hurts to state it anyway.

The f-test in statistics

Yep, we’re getting right back into it. I’m still working out things from yesterday, so we can just talk more statistics. This will be an interesting one and hopefully it will be pretty straightforward. Today’s topic is the f-test, which in this case is really the f-test to compare two variances. You may have guessed that the t-test uses the t-distribution (sort of like the normal). Well, the f-test uses the f-distribution, which is nothing like the normal! Let’s dive in, shall we?

Confidence intervals in statistics

Well since our mad scientist from yesterday’s post is on a short break, today we’re going to fill in some of the gaps that post brought into view. First up is the confidence interval. There are some subtle points here, so this should help clarify a few things that may not have been clear yesterday. We’re going to do a somewhat deep dive into what the heck we’re doing when we talk confidence interval and why the standard deviation of our data is important in determining the values.

The t-test in statistics

Welcome fellow mad scientist enthusiasts, the last time we talked statistics, we found ourselves in an interesting situation and we need to figure out if the mind control device that was developed is actually working. We introduced the idea of a two population problem and today we’re going to use something called a t-test to determine if our mad scientist succeeded.

Two populations in statistics

As a mad scientist, or maybe just a grumpy scientist, you want to test a new mind control technique! To do this you decide to test whether it works by having people select one of two objects set in front of them. *Insert evil laugh* Using your mind control technique, you want your unwitting participants to pick the object on the left. You don’t get 100% success, but suspect it’s working, so how do we know for sure?

A fair coin in statistics

It has been a busy day, but the show must go on so to speak and I’m here today to tell you I have a nice shiny new coin for us to flip! The catch you may ask? Well it could be a fair coin or I may have just swapped it out for a coin that was not fair! Our goal is to see if I’m being sneaky and to do that we’re going to need some statistics!
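One way to catch a sneaky coin swap, sketched here under my own assumptions rather than as the method the full post uses, is an exact two-sided binomial test: count every outcome at least as far from an even split as the one we saw, and ask how likely those outcomes are for a fair coin. The function name and the 60-heads-in-100-flips example are hypothetical.

```python
from math import comb


def two_sided_p_value(heads: int, flips: int) -> float:
    """Exact two-sided p-value for a fair coin (probability of heads = 0.5)."""
    expected = flips / 2
    distance = abs(heads - expected)
    # Count the sequences whose head count is at least as far from the
    # expected value as the observed count, in either direction.
    extreme = sum(
        comb(flips, k)
        for k in range(flips + 1)
        if abs(k - expected) >= distance
    )
    # Every sequence of flips is equally likely for a fair coin.
    return extreme / 2 ** flips


# Hypothetical experiment: 60 heads out of 100 flips.
p = two_sided_p_value(60, 100)
print(f"p-value = {p:.4f}")
```

A small p-value suggests the coin may not be fair; a large one means the result is the kind of thing a fair coin does all the time.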

The central limit theorem in statistics

Today we’re gonna push it to the limit! The central limit theorem that is. It’s a cornerstone in statistics and the short and dry version is that if we take enough samples from almost any distribution, the means of those samples end up following an approximately normal distribution. If it weren’t for the central limit theorem, statistics would hurt far worse than it does now (speaking as someone taking a stats class now). For the longer version we need to discuss why a normal distribution is needed and why we prefer to work with it!
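Here is a small simulation sketch of that idea, using only Python’s standard library. I’m assuming an exponential distribution as the decidedly non-normal starting point, and the sample sizes and seed are arbitrary choices for illustration.

```python
import random
import statistics


def sample_means(n_samples: int, sample_size: int, seed: int = 0) -> list:
    """Draw many samples from an exponential distribution and return their means."""
    rng = random.Random(seed)
    return [
        statistics.fmean([rng.expovariate(1.0) for _ in range(sample_size)])
        for _ in range(n_samples)
    ]


# 2000 samples of 50 draws each, from a skewed distribution with mean 1.
means = sample_means(n_samples=2000, sample_size=50)

center = statistics.fmean(means)
spread = statistics.stdev(means)

# The theorem predicts the means cluster around 1 with a spread
# close to 1 / sqrt(50), even though the raw data are heavily skewed.
print(f"mean of sample means: {center:.3f}")
print(f"spread of sample means: {spread:.3f}")
print(f"predicted spread: {1 / 50 ** 0.5:.3f}")
```

Plotting a histogram of `means` would show the familiar bell shape, while a histogram of the raw exponential draws would not.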

Effect size in statistics

We’ve been talking statistics for the past few days and today we’re talking effect size. The short explanation is effect size is the difference between two conditions! The bigger the effect size, the easier it is to tell the two conditions apart, easy… right? There’s a lot that goes into determining effect size, after all it’s hard to know what your effect size is without having some prior knowledge about what your groups look like, so let’s go into some detail.

Errors in statistics

Everyone makes mistakes, that’s okay! In day to day life there are a lot of different ways you and I could make mistakes. In statistics however, there are just two ways for you to make a mistake. That may sound like a good deal, but trust me when I say two ways to make a mistake is two too many. To think, you spent all that time picking the right statistical test, did the experiment, analyzed the data, just to make an error in the end. Don’t worry, it happens to the best of us, but knowing what they are will help you prevent them!

The hypothesis in statistics

As promised today we’re talking statistics for experiments! It’s more interesting than it sounds… okay it’s exactly as exciting as it sounds. Depending on who you are that’s a lot or not at all. No matter where on the spectrum you fall, knowing how it works is useful. So we’re starting at the beginning and discussing what a hypothesis is and how we test it.

The last PhD requirement

We’re already at the end of the month, how the hell did that happen? It’s been close to a month and a half since the term started and it feels like it’s flying by. I realized that when I first started this project I covered a lot of the stuff I was learning at the time. In fact one of my previous class notes posts was in my top 10 highest viewed blog posts for 2020. Somewhere along the line I stopped doing that, so today we’re going to talk about what I’m taking this term, why I’m taking it, and why I’ll probably be adding a few step by step instructions for how you can do what I’m learning too in some of my upcoming posts.

The zero factorial

Today I’m doing some stats homework and was reminded of an odd quirk in math, the zero factorial. It’s not very intuitive and I absolutely love weird math, so I thought I would share the fun. I never said I was normal… Anyway, today we’re going to go over why 0! (zero factorial) is so interesting!
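One common way to see why 0! comes out to 1 is to run the factorial pattern backwards: since (n + 1)! = (n + 1) × n!, each factorial is the next one divided by n + 1. The helper below is a hypothetical name of my own, and this is just one line of reasoning, not necessarily the one the full post takes.

```python
import math


def factorial_from_next(n: int) -> int:
    """Recover n! by dividing (n + 1)! by (n + 1)."""
    return math.factorial(n + 1) // (n + 1)


# Walk the pattern downward: 3! from 4!, 2! from 3!, and so on to 0!.
for n in range(3, -1, -1):
    print(f"{n}! = {factorial_from_next(n)}")

# Python's built-in factorial agrees on the zero case.
zero_factorial = math.factorial(0)
```

Following the pattern down to n = 0 gives 1! / 1, which is why the “empty” factorial lands on 1 rather than 0.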
